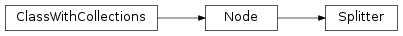

mvpa2.featsel.rfe.Splitter

class mvpa2.featsel.rfe.Splitter(attr, attr_values=None, count=None, noslicing=False, reverse=False, ignore_values=None, **kwargs)

Generator node for dataset splitting.

The splitter is configured with the name of an attribute. When its generate() method is called with a dataset, it successively yields all possible subsets of this dataset, selecting all dataset samples/features that correspond to a particular attribute value, for each unique attribute value. Dataset splitting is possible by sample attribute or by feature attribute. The maximum number of splits can be limited, and custom attribute values may be provided.
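The core splitting idea can be illustrated without PyMVPA. The following is a minimal plain-Python sketch, not the library's implementation: a dataset is modeled as a list of samples plus a parallel list of attribute values (for instance, chunks), and the hypothetical helper `split_by_attr` yields one subset per unique value. Visiting the values in sorted order is an assumption here (it mirrors what `numpy.unique` returns). Splitting by a feature attribute works the same way, with features taking the place of samples.

```python
from typing import Hashable, Iterator, List, Sequence, Tuple


def split_by_attr(
    samples: Sequence, attr: Sequence[Hashable]
) -> Iterator[Tuple[Hashable, List]]:
    """Yield (value, subset) pairs, one per unique attribute value.

    Hypothetical helper illustrating the splitting idea; not PyMVPA code.
    """
    if len(samples) != len(attr):
        raise ValueError("expected exactly one attribute value per sample")
    # Assumption: unique values are visited in sorted order.
    for value in sorted(set(attr)):
        # Select every sample whose attribute matches the current value.
        subset = [s for s, a in zip(samples, attr) if a == value]
        yield value, subset


samples = ["s0", "s1", "s2", "s3", "s4", "s5"]
chunks = [0, 0, 1, 1, 2, 2]
for value, subset in split_by_attr(samples, chunks):
    print(value, subset)
# 0 ['s0', 's1']
# 1 ['s2', 's3']
# 2 ['s4', 's5']
```

Because this is a generator, each subset is only built when the consumer asks for the next split.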

Notes

Available conditional attributes:

calling_time+: Time (in seconds) it took to call the node.

raw_results: Computed results before invoking postproc. Stored only if postproc is not None.

(Conditional attributes enabled by default are suffixed with +.)

Attributes

descr: Description of the object, if any.

pass_attr: Which attributes of the dataset or self.ca to pass into the result dataset upon call.

postproc: Node to perform post-processing of results.

space: Processing space name of this node.

Methods

__call__(ds[, _call_kwargs]): The default implementation calls _precall(), _call(), and finally returns the output of _postcall().

generate(ds): Yield dataset splits.

get_postproc(): Returns the post-processing node or None.

get_space(): Query the processing space name of this node.

reset()

set_postproc(node): Assigns a post-processing node.

set_space(name): Set the processing space name of this node.

Parameters:

attr : str

Typically the sample or feature attribute used to determine splits.

attr_values : tuple

If not None, this is a list of values of the attr used to determine the splits. The order of values in this list defines the order of the resulting splits. It is possible to specify a particular value multiple times. All dataset samples with values that are not listed will be ignored.

count : None or int

Desired number of generated splits. If None (default), all splits are output; otherwise the number of splits is limited to the given count or the maximum number of possible splits, whichever is less.

noslicing : bool

If True, dataset splitting is not done by slicing (which causes data to be shared between the source and split datasets), even when slicing would be possible. By default, slicing is performed whenever possible to reduce the memory footprint.

reverse : bool

If True, the order of datasets in the split is reversed, e.g. instead of (training, testing), (testing, training) will be yielded.

ignore_values : tuple

If not None, this is a list of values of the attr that shall be ignored when determining the splits. This setting also affects any specified attr_values.

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a; see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute stats. In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() to perform a final processing step on the result dataset before it is returned. If None, nothing is done.

descr : str

Description of the instance
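How attr_values, ignore_values, reverse, and count interact can be sketched as a function that only decides which attribute values produce splits, and in what order. This is a hedged plain-Python approximation: the name `select_split_values` is hypothetical, sorted unique values are assumed when attr_values is None, and the order in which PyMVPA applies these options internally is an assumption (here: choose values, drop ignored ones, truncate to count, then reverse).

```python
from itertools import islice
from typing import Hashable, Iterable, List, Optional, Sequence


def select_split_values(
    attr: Sequence[Hashable],
    attr_values: Optional[Iterable[Hashable]] = None,
    ignore_values: Optional[Iterable[Hashable]] = None,
    reverse: bool = False,
    count: Optional[int] = None,
) -> List[Hashable]:
    """Return the attribute values that would produce splits, in order.

    Hypothetical helper; the order of the steps below is an assumption.
    """
    # attr_values, if given, fixes the order and may repeat values;
    # otherwise every unique value is used (sorted, as an assumption).
    values = list(attr_values) if attr_values is not None else sorted(set(attr))
    # ignore_values removes values even if they were listed in attr_values.
    ignored = set(ignore_values) if ignore_values is not None else set()
    values = [v for v in values if v not in ignored]
    # count limits the number of splits; islice stops early if fewer exist.
    if count is not None:
        values = list(islice(values, count))
    # reverse flips the order in which the splits are produced.
    if reverse:
        values.reverse()
    return values


chunks = [0, 0, 1, 1, 2, 2, 3, 3]
print(select_split_values(chunks))                         # [0, 1, 2, 3]
print(select_split_values(chunks, attr_values=(2, 0, 2)))  # [2, 0, 2]
print(select_split_values(chunks, attr_values=(2, 0, 2), ignore_values=(2,)))  # [0]
print(select_split_values(chunks, count=10))               # [0, 1, 2, 3]
print(select_split_values(chunks, reverse=True))           # [3, 2, 1, 0]
```

Values missing from the chosen list (chunks 1 and 3 when attr_values=(2, 0, 2)) produce no split, so their samples are ignored, and count=10 yields only the four splits that actually exist.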

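The method summary says that __call__() runs _precall(), then _call(), and returns the output of _postcall(), while the postproc parameter and the raw_results conditional attribute describe an optional final step. The class below is a hypothetical stand-in (`SketchNode`, not a PyMVPA class) that models this sequence in plain Python; doubling each value stands in for real processing, and keeping raw results in a plain attribute simplifies PyMVPA's conditional-attribute machinery.

```python
from typing import Any, Callable, List, Optional


class SketchNode:
    """Hypothetical stand-in for a node with optional post-processing."""

    def __init__(self, postproc: Optional[Callable[[Any], Any]] = None):
        self.postproc = postproc
        self.raw_results: Optional[Any] = None

    def _precall(self, ds: List[int]) -> None:
        # Preparation hook; nothing to do in this sketch.
        pass

    def _call(self, ds: List[int]) -> List[int]:
        # Placeholder for the node's actual processing.
        return [x * 2 for x in ds]

    def _postcall(self, ds: List[int], result: Any) -> Any:
        if self.postproc is None:
            # Without postproc the result is returned unchanged.
            return result
        # Keep the pre-postproc result, mirroring raw_results.
        self.raw_results = result
        return self.postproc(result)

    def __call__(self, ds: List[int]) -> Any:
        self._precall(ds)
        result = self._call(ds)
        return self._postcall(ds, result)


print(SketchNode()([1, 2, 3]))  # [2, 4, 6]
node = SketchNode(postproc=sum)
print(node([1, 2, 3]))          # 12
print(node.raw_results)         # [2, 4, 6]
```

Routing the final step through _postcall() keeps post-processing out of each subclass's _call(), so any node can accept a postproc without changing its core computation.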

generate(ds)

Yield dataset splits.

Parameters:

ds : Dataset

Input dataset

Returns:

generator

The generator yields every possible split according to the splitter configuration. All generated datasets have a boolean ‘lastsplit’ attribute in their dataset attribute collection indicating whether this particular dataset is the last one.
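The ‘lastsplit’ flag can be sketched in plain Python. Here each split is modeled as a dict with a hypothetical "lastsplit" key, standing in for the boolean stored in a generated dataset's attribute collection; the helper name `generate_splits` and the sorted order of values are assumptions, not PyMVPA's code. Since the list of values is known before iteration starts, the final split can be flagged by comparing its index with the number of splits.

```python
from typing import Dict, Hashable, Iterator, Sequence


def generate_splits(samples: Sequence, attr: Sequence[Hashable]) -> Iterator[Dict]:
    """Yield one dict per split, flagging the final one with 'lastsplit'.

    Hypothetical sketch; real PyMVPA splits are Dataset objects whose
    dataset attribute collection carries the 'lastsplit' flag.
    """
    values = sorted(set(attr))  # assumption: sorted unique values
    for i, value in enumerate(values):
        yield {
            "value": value,
            "samples": [s for s, a in zip(samples, attr) if a == value],
            # The number of splits is known in advance, so the last one
            # can be flagged without looking ahead in the generator.
            "lastsplit": i == len(values) - 1,
        }


for split in generate_splits(["s0", "s1", "s2", "s3"], [1, 1, 2, 2]):
    print(split["value"], split["samples"], split["lastsplit"])
# 1 ['s0', 's1'] False
# 2 ['s2', 's3'] True
```

Checking the flag lets a consumer detect the final split without first materializing the whole generator into a list.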